Abstract

The Digital Humanities project Florence As It Was (http://florenceasitwas.wlu.edu) seeks to reconstruct the architectural and decorative appearance of late Medieval and early Modern buildings by combining 3D point cloud models of buildings (i.e. extant structures such as chapels, churches, etc.) with 3D rendered models of artworks that were installed inside them during the fourteenth and fifteenth centuries. This paper documents a novel bifurcated workflow that allows the construction of such integrated 3D models as well as an example case study of a church in Florence, Italy called Orsanmichele (https://3d.wlu.edu/v21/pages/orsanmichele2.html). The key steps worked out in the optimized workflow include: (1) art historical research to identify the original artworks in each building, (2) the use of LiDAR scanners to obtain 3D data (along with associated color information) of the interiors and exteriors of buildings, (3) the use of high-resolution photogrammetry of works of art (i.e. paintings and sculptures) which have been removed from those buildings and are now in public collections, (4) the generation of point clouds from the 3D data of the buildings and works of art, (5) the editing and joining of textured polygon models of artworks with a reduced-size point cloud model of the buildings (produced using novel algorithms) in an open-source software tool called Potree so that artworks can be embedded in their original architectural settings, and (6) the annotation of these models with scholarly art historical texts that give viewers immediate access to information, archival evidence, and historical descriptions of these spaces. The integrated point cloud and textured models of buildings and artworks, respectively, plus annotations are then published with Potree.
This process has resulted in the development of highly accurate virtual reconstructions of key monuments from the Florentine Middle Ages and Renaissance (like the fourteenth-century building of Orsanmichele and the multiple paintings that were once inside it) as they originally appeared. The goal of this project is to create virtual models for scholars and students to explore research questions while providing key information that may assist in generating new projects. Such models represent a significant tool to allow improved teaching of art and architectural history. Furthermore, since the original locations of some of the historic artworks within these sites are not always firmly known, the virtual model allows users to experiment with potential arrangements of objects in and on the buildings they may have originally decorated.

Introduction

Digital technologies have been employed to create virtual 3D models of geological formations, building sites, and simple objects since the 1980s [1,2,3,4]. These 3D models are constructed from data collected using techniques such as laser scanning, LiDAR, structured light, and photogrammetry. A data processing pipeline transforms the data from the initial generated point clouds to meshes to the final rendered (textured) 3D models with which users can interact in a viewing platform.

While the process of creating textured polygon models using photogrammetry dates to the 1980s, the employment of photogrammetry as a tool for the capturing and rendering of artworks is a more recent development, with its origins postdating the turn of the twenty-first century [5]. Additionally, the technical processes involved in using LiDAR equipment to scan architectural structures, edit individual scans, and stitch together laser-captured forms were established only recently [6,7,8,9,10,11]. Since 2005, pipelines have been established to create accurate point cloud models of important sites such as the basilica of S. Francesco in Assisi, the Medieval wall of Avila, the Roman Forum in Pompeii, and multiple structures produced during the early modern period in Florence, to name but a few [12,13,14,15]. More recently, specialists have combined photogrammetric models with point clouds for research purposes, layering the former over the latter in order to place textured surfaces on top of spatially accurate models produced with LiDAR equipment [16,17,18,19]. An important aspect of the traditional model construction workflow has been the time-consuming process of editing collected scans and images to eliminate tangential data that interferes with the model construction and the combining of individual point clouds to create an accurate representation of the structure.

Museums have been collaborating with imaging scientists and art historians to create 3D digital models of their collections, and the medium of sculpture usually receives the bulk of the attention [20, 21]. Color 3D models of individual artworks are usually of modest size, such that they can be hosted on websites for scholars and the public to directly interact with the 3D models. They are usually controlled either by the owner of the original object or by the group responsible for its reproduction, often via a platform such as Sketchfab [22,23,24]. Similarly, the proprietors of cultural heritage sites have invited 3D imaging specialists into their built environments to scan their spaces to document their features for monitoring and historical purposes [25]. However, because they have been produced for reasons of research, preservation, and future restoration, these 3D models are usually made available only for the explicit use of museums, conservation experts, academic researchers, architects, and skilled craftsmen [26, 27]. For years, neither scholars nor the general public had the opportunity to examine point cloud models of important cultural heritage sites due to the unavailability of software programs that permitted users to navigate through models that were too large to be read on even well-equipped computers in the public sector. However, the ability to make available to general users point cloud models of both culturally significant architectural spaces and of the artworks that once decorated them has improved recently; it is now possible to combine both forms into a single model to reconstruct the possible original arrangement of the internal spaces.

Some European museums have experimented with combining 3D models of artworks and historic sites, although with significant limitations. The National Gallery in London, England produced a model of the demolished Florentine church of S. Pier Maggiore by inserting into a textured model of a point cloud of the building a pair of polygon models of paintings in the museum’s collection. This model was featured in a short video produced in 2015 that ultimately resulted in the formation of a Digital Humanities project called Florence4D (designed by Donal Cooper at Cambridge University and Fabrizio Nevola at Exeter University); however, in its current form, their model of S. Pier Maggiore cannot be accessed by the public [28]. In 2017 the Museo di San Marco rendered a reconstruction of the headquarters of the Florentine Linen Workers Guild and inserted into their model the museum’s large tabernacle of the Madonna del Linaiuolo by Lorenzo Ghiberti and Fra Angelico that was installed in the guildhall in 1434. Used for didactic purposes only, the model was never published. In a similarly unpublished venture, the Museo Civico and Bishop’s Palace in Pistoia created a 3D reconstruction of their cathedral that included a model of the original high altarpiece from that space. Visitors could see the model while standing in the exhibition space, but could not access it from home. Clearly, the combination of models of both buildings and the artworks that originally decorated them is a promising venture in the documentation and exploration of cultural heritage. However, none of these earlier models is accessible to the public for private scrutiny, which limits their value for further study beyond their stunning visual qualities.

This paper presents a novel 3D reconstruction methodology developed by Florence As It Was that embeds textured 3D models of artworks into LiDAR-created point cloud models of the buildings in which those artworks were originally installed through the use of an open-source software tool called Potree. This workflow skips the labor-intensive effort of generating textured models of large-scale point clouds of buildings. This simplification is crucial for large projects like Florence As It Was, which aims to reconstruct the artistic environments of dozens of structures from the thirteenth, fourteenth, and fifteenth centuries, as most current software tools require prohibitively lengthy workflows to achieve suitable results. This novel workflow reduces the size of a point cloud model to make it accessible to the public, while still rendering structures in enough detail to support meaningful visualizations of the artworks that were once inside them. This allows for the production of virtual reconstructions of pre- and early-modern spaces as their designers originally intended. These reconstructions permit scholars to analyze current theories proposed in the literature, test new hypotheses that posit alternative artwork arrangements, examine iconographic references between individual images, and formulate relational connections between images that have been removed and relocated to distant sites. Furthermore, they give scholars and the public direct access to spaces and objects, allowing them to visit and research artworks and historically significant spaces without time constraints. These hybrid models are also annotated with texts produced by art historians about the sites and the artworks, which enrich the scholarly and public experience. An example of this workflow may be seen in the case study of Orsanmichele, a church in the center of Florence, Italy that was built and decorated in the fourteenth and fifteenth centuries (Figs. 1 and 2).
The results of this work are hosted at the project website at https://3d.wlu.edu/v21/pages/orsanmichele2.html.

Material and methods

Church of Orsanmichele and its Paintings

This case study involves the 3D modeling of the three-story building of Orsanmichele, located in the heart of Florence, Italy. The building of Orsanmichele was used as a granary until it was transformed into a church in 1336. During the fourteenth century, a number of panel paintings were produced and installed on the twelve piers that serve as the building’s foundation. All three floors, a connective staircase, and the entire exterior were scanned, and five paintings that were known to be originally installed on interior piers (but were removed in the early fifteenth century) were modeled (Fig. 3).

Five painted panels that once were displayed in the interior of Orsanmichele: a Jacopo del Casentino, Saint Bartholomew, Galleria dell’Accademia, Florence; b Andrea di Cione, Saint Matthew with Scenes from the Legend of Saint Matthew, Uffizi Galleries, Florence; c Matteo di Pacino, Devotional painting for a Florentine guild (Guild of Doctors and Apothecaries?): Saints Cosmas and Damian; [Left predella]: Miracle of the Transplantation of the Black Leg; [Right predella]: Decapitation of Saints Cosmas and Damian, North Carolina Museum of Art, Raleigh, NC; d Lorenzo di Bicci, Saint Martin and Saint Martin Dividing His Cloak, Galleria dell’Accademia, Florence; e Giovanni del Biondo, Saint John the Evangelist and the Apotheosis of Saint John the Evangelist, Galleria dell’Accademia, Florence

LiDAR

To create a 3D color model of the buildings at historical sites like Orsanmichele, data were collected with two portable LiDAR (light detection and ranging) systems from Leica Geosystems: the BLK360 and the RTC360 (Fig. 4). Both are tripod-mounted and use laser range finding combined with 360-degree photography to create color point clouds of scanned areas. The two systems differ in the speed of collection, range, and the accuracy of the resulting cloud. The BLK360 has a range of 60 m, a data collection speed of 360,000 points/second, and an accuracy of 6 mm at 10 m. A single medium density scan takes roughly five minutes to complete, and its compact size makes it both portable and efficient on site. However, the RTC360 far surpasses the specifications of the BLK360. With a range of 130 m, a collection speed of 2,000,000 points/second, and an accuracy of 1.3 mm at 10 m, the RTC360 completes each scan much faster than the BLK360 (one medium density scan takes less than two minutes to complete).

Photogrammetry

Structure-from-motion (SfM) photogrammetry was used to generate the 3D color models of artworks. SfM photogrammetry is the process of acquiring 3D data and color information from multiple photographs taken from various positions and angles. Objects of interest, such as paintings or sculptures, are photographed in sequence in a series of rows moving across their surfaces and from all orientations, including above, below, and the sides, with care taken to maintain a 70% overlap between each capture (Fig. 5). Normally, a digital camera, tripod, and additional external lighting (when there is insufficient ambient lighting) are used. For the dossal panel of Sts. Cosmas and Damian, a Nikon D610 full frame digital SLR camera was paired with a Zeiss Makro-Planar 50 mm lens and mounted on a tripod. However, initial tests were conducted on four of the panels from Orsanmichele (Jacopo del Casentino’s Saint Bartholomew, Andrea and Jacopo di Cione’s Saint Matthew, Lorenzo di Bicci’s Saint Martin, and Giovanni del Biondo’s Saint John the Evangelist) with the camera in an iPhone 8 Plus cell phone, with promising results (Fig. 3). Often during this step scale bars are placed in the camera frame to give real-world dimensions to the finished model. In our test the artworks were part of an active exhibition, and it was therefore impossible to attach scale bars to the wall during photography. Instead, published dimensions were used to establish the scale of each artwork. The photographic datasets for photogrammetry were initially processed using the Adobe Camera Raw software tool to adjust the white balance and exposure. No image sharpening, cropping, or image distortion correction was performed, as these modifications would affect the quality of the finished model [29]. After adjustment, high quality JPEG images were exported for processing with the photogrammetry software.
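The 70% overlap requirement largely determines how many frames a row-by-row capture needs. The following is a minimal planning sketch, assuming a flat panel photographed square-on with a constant frame footprint; the function names and the example dimensions are hypothetical and are not taken from the project's capture logs. It covers only the frontal rows, not the additional views from above, below, and the sides.

```python
import math


def frames_along_axis(extent_mm: float, footprint_mm: float, overlap: float = 0.70) -> int:
    """Frames needed along one axis so neighbouring frames share `overlap` of their footprint."""
    if extent_mm <= 0 or footprint_mm <= 0:
        raise ValueError("extent and footprint must be positive")
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be in [0, 1)")
    if extent_mm <= footprint_mm:
        return 1  # a single frame already covers the whole extent
    step = footprint_mm * (1 - overlap)  # distance the camera moves between frames
    return 1 + math.ceil((extent_mm - footprint_mm) / step)


def capture_grid(width_mm, height_mm, frame_w_mm, frame_h_mm, overlap=0.70):
    """Return (columns, rows, total frames) for a frontal pass over a flat panel."""
    cols = frames_along_axis(width_mm, frame_w_mm, overlap)
    rows = frames_along_axis(height_mm, frame_h_mm, overlap)
    return cols, rows, cols * rows


# Hypothetical panel of 1000 mm x 1500 mm, each frame covering 300 mm x 200 mm
print(capture_grid(1000, 1500, 300, 200))
```

Raising the overlap or shrinking the frame footprint (for higher spatial resolution) increases the frame count quickly, which is why the required resolution drives the size of the photographic dataset.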

Software tools

Metashape Professional (version 1.8.1) from Agisoft was used to create textured photogrammetry models. Digital Lab Notebook (DLN): Capture Context (version 1 Beta), an open-source project by Cultural Heritage Imaging, was used for tracking metadata in photogrammetry projects. Adjustments to photos were made with Adobe Camera Raw (version 10.4.0.976) by Adobe. Cinema 4D (version S23) was used to trim textured mesh models and make minor edits prior to incorporating them into the hybrid model. Leica Register 360 (version 2020.1) from Leica was used for the registration and editing of LiDAR point cloud data. The open-source program Cloud Compare (version 2.10.2) was used for format conversion and final decimation of LiDAR data. Potree Converter (version 2.1) was used to create a hierarchical data structure from the decimated point clouds. Potree (version 1.8) is currently being used on the server to handle delivery of the point clouds via web browsers.

Results

In this section, the optimized workflow to produce hybrid models of buildings and their associated original artworks is presented, along with an example case study of Orsanmichele with the panel paintings it contained at the end of the fourteenth century.

The 3D reconstruction workflow and methodology

The workflow is a bifurcated process that constructs accessible hybrid 3D models of historic sites (of buildings and their associated artworks) in fourteenth-century Florence, summarized in Fig. 6. While a variety of different methodologies can be used to collect 3D color data of artworks and buildings, LiDAR with associated color information was selected to acquire data of buildings, and SfM photogrammetry was used to collect 3D data of the artworks that art historical research associated with those buildings. Key goals of this workflow were to ensure that the hybrid 3D model could be explored using personal computers and laptops, and to reduce the amount of time and labor needed to produce these integrated models. The simplest approach would be to render both buildings and artworks as textured models; however, the rendering of entire buildings such as Orsanmichele is too time-intensive to be practical, particularly since the goal of the project is to capture the appearance of roughly forty structures in Florence. Thus, a compromise was made to keep buildings as point cloud models, but to create textured models of artworks. Accordingly, the workflow for the buildings was designed and optimized to reduce the number of data points in the final point cloud. The reduction of the size of point cloud files and the implementation of the Potree program have made these products accessible to the public. But to make them more useful for both research and educational outreach, carefully researched auxiliary data was added in the form of text annotations.

LiDAR data collection of buildings

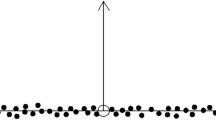

A LiDAR scanner measures the distance from the scanner's position to whatever object lies in its direct line of sight. The 3D model is obtained by sweeping the laser through a wide range of angles. To create 3D color models here, the chosen LiDAR units scan twice, with the first scan collecting data from the laser and the second capturing color information using cameras. Multiple scans must be completed around the exterior of a structure, through doorways and passages that connect one space to another, and inside each room of a building. Care is taken to capture areas from different angles and to position each scan so that it aligns with the previous one. The number of scans depends on the size of the space and the obstacles present.
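The relationship between a range measurement and a 3D point can be illustrated with a short conversion from distance and beam angles to Cartesian coordinates. This is a sketch under assumed angle conventions (azimuth measured in the horizontal plane from the x axis, elevation measured up from that plane); the scanners perform this step internally and their actual conventions may differ.

```python
import math


def polar_to_xyz(distance_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one range reading and its beam angles into scanner-centred x, y, z (metres)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = distance_m * math.cos(el)  # projection of the beam onto the ground plane
    return (
        horizontal * math.cos(az),
        horizontal * math.sin(az),
        distance_m * math.sin(el),
    )


# A surface 10 m away, 90 degrees around from the x axis and level with the scanner
x, y, z = polar_to_xyz(10.0, 90.0, 0.0)
print(round(x, 6), round(y, 6), round(z, 6))
```

Because every point is expressed relative to one scanner position, separate scans only share a coordinate system after they have been aligned with one another.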

LiDAR data post-processing

The multiple LiDAR scans of a building need to be combined and edited. This was done using Leica Register 360 software, which is used to fine-tune the alignment of scan positions and clean the point clouds by deleting specific sets of unwanted points [30]. These unwanted points are due to various factors. Cultural heritage sites tend to be open to the public, and stanchions, cords, and rope lines are often placed in the middle of these sites for purposes of crowd control, while educational materials are similarly installed in these spaces to explain images and objects of interest. Human beings often wander through an area during the scanning process, leaving behind floating groups of points that recall their shapes (but not their identities). The editing process involves removing all discolored sections of point clouds caused by over- or underexposed photographs, unwanted human forms, and anachronistic objects that do not belong—fire extinguishers, didactic materials, chairs, and stanchions, for example.

Once the point cloud has been cleaned, its size needs to be reduced to make the final building model accessible on a personal computer. A full resolution point cloud for an entire building complex comprising multiple structures might contain ten billion points or more, which is too large to be shared online. Register 360 is used to reduce, or decimate, the number of points in a model to a manageable size. This process impacts the appearance of the model minimally, as full resolution models have many duplicated points. The final number of points in the decimated model depends on a number of factors, most importantly the physical size of the structure and the number of interior and exterior spaces that are scanned. Orsanmichele, for example, is a relatively small structure: it has three floors with a single room on each floor. The decimated model for this building contains 350 million points. By way of comparison, another architectural environment scanned as part of the Florence As It Was project, the mendicant complex of S. Maria Novella, required a reduction to 1 billion points to achieve a similar density due to its larger physical size. Therefore, the number of points needed to create a viable decimated point cloud model is determined by the structure itself and is arrived at through a process of trial and error. The visualization of the point cloud model is facilitated by a program called Potree, discussed below.
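One common way to thin a point cloud while keeping an even density is voxel-grid subsampling, in which at most one point is kept per small cube of space. The sketch below illustrates that general idea only; it is an assumption for illustration and is not a description of the algorithm used by Register 360 or Cloud Compare. The function name and sample coordinates are hypothetical.

```python
import math
from typing import Iterable

Point = tuple[float, float, float]


def voxel_decimate(points: Iterable[Point], voxel_size: float) -> list[Point]:
    """Keep the first point encountered in each cubic voxel of edge length `voxel_size`."""
    if voxel_size <= 0:
        raise ValueError("voxel_size must be positive")
    kept: dict[tuple[int, int, int], Point] = {}
    for p in points:
        # Integer voxel index along each axis identifies the cube the point falls in
        key = (
            math.floor(p[0] / voxel_size),
            math.floor(p[1] / voxel_size),
            math.floor(p[2] / voxel_size),
        )
        kept.setdefault(key, p)  # later points in an occupied voxel are dropped
    return list(kept.values())


cloud = [(0.0, 0.0, 0.0), (0.001, 0.001, 0.001), (0.5, 0.0, 0.0), (1.2, 0.0, 0.0)]
print(len(voxel_decimate(cloud, 1.0)), len(voxel_decimate(cloud, 0.1)))
```

In a scheme like this, the trial and error described above amounts to adjusting the voxel size until the point count is small enough to serve online while the architecture still reads clearly.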

Photogrammetry of artworks

The photographs necessary to create the color 3D models were collected according to the procedure previously described and outlined in Table 1. The spatial resolution needed to visualize the works of art drives the number of photographs acquired during data collection. The subsequent conversion of the photographs into a polygon model is done with Agisoft Metashape [31]. The color-corrected photographs, in JPEG format, are first imported and then aligned in a 3D space using the settings “high accuracy,” “generic preselection,” “40,000 key point limit,” and “4,000 tie point limit.” After alignment, the orientation and position of the resulting point cloud are corrected to ensure that coordinates have been centered on the zero point of the world coordinates and that the point cloud is perpendicular to the ground plane. This step makes it easier to eventually align the artworks with the point cloud of the building. After orienting the model in the coordinate system, a dense cloud is created using the setting “high quality” and a mild or moderate depth filtering. The size of the dense point clouds of artworks typically ranges from 1 to 10 million points. Ornate 3D objects, like sculptures or multi-paneled altarpieces, often feature more points than single panel paintings. Once the point cloud has been constructed, the model is given a scale. Since scale bars were not included in the photographs, points were placed on the model at locations separated by known dimensions of the artwork. These points were used in the “Scale Bars” section in Metashape to establish a real-world scale. The point cloud is then cleaned by deleting parts of the model that are not required in the final version, such as walls, ceilings, or floors.
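The arithmetic behind scaling a model from a published dimension, and centering it on the origin, is simple enough to sketch. The example below only illustrates the calculation that a scale bar implies; Metashape performs the equivalent step through its own interface, and the function names, marker positions, and the 1.0 m dimension here are hypothetical.

```python
import math


def scale_factor(marker_a, marker_b, known_distance_m: float) -> float:
    """Ratio that maps the unscaled model distance between two markers onto the real one."""
    model_distance = math.dist(marker_a, marker_b)
    if model_distance == 0:
        raise ValueError("markers must not coincide")
    return known_distance_m / model_distance


def centre_and_scale(points, factor: float):
    """Move the centroid of `points` to the world origin, then apply the scale factor."""
    n = len(points)
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    cz = sum(p[2] for p in points) / n
    return [((x - cx) * factor, (y - cy) * factor, (z - cz) * factor) for x, y, z in points]


# Two markers placed on opposite edges of a panel whose published width is 1.0 m
factor = scale_factor((0.0, 0.0, 0.0), (0.0, 2.0, 0.0), 1.0)
print(factor, centre_and_scale([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)], factor))
```

Using more than one pair of markers and comparing the resulting factors is a simple way to spot a misplaced marker or an unreliable published dimension.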

The next step in the process is to create a polygon mesh from the point cloud using “Depth Maps” as the source, the surface type set to “Arbitrary(3D),” quality set to “High” with a custom face count, and a “mild” depth filtering. The number of polygons depends on the complexity of the real-world object. Flat surfaces with few protrusions or projections may be formed with relatively few polygons, while those with complex surfaces will require many more. The number of polygons created in this step is minimized to speed their loading when fitted into point clouds. The minimal loss of detail is not important in the final reconstruction, as the purpose of the 3D models of artworks is to give the viewer an impression of what the object might have looked like in its original setting, not to document minute surface details with pinpoint accuracy. The polygon counts for the artworks in the case study range from about 50,000 to over 200,000 (Table 1). A photographic texture is then added to the mesh. In Metashape, pieces of the original photos are stitched together to create a 2D image that is wrapped onto the 3D file to give the model the appearance of the original object. Textures are created using “Diffuse map” as the texture type, “Images” as the source data, “Generic” as the mapping mode, and “Mosaic” as the blending mode. The texture size varies depending on the size and complexity of the artwork: either 4K (4096 × 4096) or 8K (8192 × 8192). Metashape has tools for cropping and editing the finished textured mesh, but it often leaves jagged edges and imperfections around the outside of the model. To clean these outside edges, the rendered model is exported to a 3D design program. Florence As It Was uses the Wavefront .OBJ format for transfer and editing, and Cinema 4D by Maxon for final trimming and cleanup [32]. After trimming and exporting from Cinema 4D, the file is ready to be incorporated into Potree to combine with the building point cloud.
Table 1 shows some of the details of the output textured mesh for the artworks.

Creating and viewing a hybrid 3D model in Potree

Potree, a suite of open-source tools for encoding and delivering large point clouds over the internet, was used to combine the 3D textured models of artworks and the point clouds of the building to make the reconstructions accessible to the public online [33, 34]. The Potree encoder uses a multi-resolution octree algorithm to organize a building’s point cloud into a 3D octree data structure. When viewed with the associated Potree viewer, this system delivers a fraction of the total points based on the user’s view direction and zoom level, making it much more efficient than loading a full point cloud. In addition, the user maintains control over the total number of points loaded and several quality parameters giving them control over the amount of computer resources used by the web interface. It is not uncommon for the size of the decimated point clouds for buildings created by Florence As It Was to exceed 10 Gigabytes. This is too large a file to load completely into memory without slowing down a typical personal computer. Potree delivers a subset of the points based on the user’s zoom level and view direction which limits the local memory usage, while maintaining quality.
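The idea of delivering only a view-dependent subset of an octree can be illustrated with a greedy traversal that loads the nodes appearing largest from the camera until a point budget is reached. This is a simplified sketch written for this explanation, not Potree's implementation: the `Node` class, the size-over-distance priority, and the example values are all assumptions.

```python
import heapq
import math
from dataclasses import dataclass, field


@dataclass
class Node:
    center: tuple          # centre of the cubic cell
    size: float            # edge length of the cell
    num_points: int        # points stored at this level of detail
    children: list = field(default_factory=list)


def priority(node: Node, camera: tuple) -> float:
    """Rough stand-in for on-screen size: large, nearby cells score highest."""
    return node.size / max(math.dist(node.center, camera), 1e-9)


def select_nodes(root: Node, camera: tuple, point_budget: int):
    """Coarse-to-fine traversal that stops refining once the budget would be exceeded."""
    selected, loaded, counter = [], 0, 0
    heap = [(-priority(root, camera), counter, root)]
    while heap:
        _, _, node = heapq.heappop(heap)
        if loaded + node.num_points > point_budget:
            continue  # skip this node; its children are never queued
        selected.append(node)
        loaded += node.num_points
        for child in node.children:
            counter += 1  # tie-breaker so nodes themselves are never compared
            heapq.heappush(heap, (-priority(child, camera), counter, child))
    return selected, loaded


near = Node((2, 2, 2), 4.0, 100)
far = Node((-2, -2, -2), 4.0, 100)
root = Node((0, 0, 0), 8.0, 100, [near, far])
chosen, total = select_nodes(root, camera=(3, 3, 3), point_budget=250)
print(len(chosen), total)
```

With the camera close to one corner, the coarse root and the nearby child are loaded while the distant child is left out, which is the behaviour that keeps memory use bounded as the user moves through a building.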

The decimated point cloud from Leica Register 360 is converted into the .LAS format using an open-source tool called Cloud Compare [35]. The command line program Potree Converter is used to encode the .LAS file into the octree format that can be delivered via a web server [36]. This step of the process creates a folder containing three files: “octree.bin,” which contains all of the point data in unordered nodes; “hierarchy.bin,” which contains the hierarchy used to order the nodes in the previous file; and “metadata.json,” which contains the data necessary to load and display the points. These applications are available on the Potree GitHub page at https://github.com/potree.
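Before copying a converted point cloud to the server, it can be useful to confirm that the output folder holds the three files described above. The following is a small helper sketch; the function name and the folder path in the usage line are hypothetical, and file names are compared case-insensitively so that the check does not depend on how a given system capitalizes them.

```python
from pathlib import Path

# The three files the conversion step is described as producing
EXPECTED_FILES = ("octree.bin", "hierarchy.bin", "metadata.json")


def missing_outputs(folder) -> list[str]:
    """Return the expected converter outputs that are absent from `folder`."""
    present = {p.name.lower() for p in Path(folder).iterdir() if p.is_file()}
    return [name for name in EXPECTED_FILES if name not in present]


if __name__ == "__main__":
    folder = Path("pointclouds/orsanmichele")  # hypothetical output location
    if folder.is_dir():
        print(missing_outputs(folder) or "all expected files present")
```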

To deliver the content publicly, an Apache web server was provisioned on a virtual machine at Washington and Lee University and mapped to a custom URL: “3d.wlu.edu.” To set up the server for hosting Potree content, all the necessary libraries and dependencies specified in the Potree resources GitHub repository were copied to the HTML directory of the web server.

Encoded point clouds and polygon models are delivered via HTML and scripts in a standard web page. The Potree Converter can generate a basic web page that includes the necessary libraries and scripts to display the point cloud and viewer controls. The viewer’s look and feel are controlled using cascading style sheets, while parameters of default settings like point cloud quality can be controlled through HTML. Ancillary open-source libraries extend the functionality of the viewer. One of these open-source libraries, Three.JS, allows for the placement of polygon models alongside the point cloud content. The Potree install includes a rich set of example pages that illustrate alternative types of content that can be added, including textual and image-based annotations. The complete syntax of any Potree webpage can be viewed using the “view source” feature in a web browser. The annotated source code for our case study page can be seen in our GitHub repository (this is a simple example page containing one point cloud, five polygon models and six textual annotations) [37]. The code has been commented to highlight the function of various sections.

Annotations: adding meaning to models

Annotations for models are essential components of this project and come in a variety of forms. These annotations may be translations of early descriptions, transcriptions of archival material, or original content written by art historians who participate as members of the Florence As It Was team. They may also include photographs and/or film clips if and where appropriate.

Art historians and their assistants review the extant literature on the structures scanned and the images photographed for 3D models. Of primary value are descriptions that were written in the seventeenth, eighteenth, nineteenth, and twentieth centuries by Italian and German critics and historians who identified the objects and images that were placed inside those structures at the time their descriptions were published [38,39,40]. These texts are often dense and difficult to understand, owing to the decorative complexity of the buildings they describe. When compared side by side, however, they provide a sequence of chronological snapshots that show the evolution of a building’s decorations over time. If Giuseppe Richa describes the interior of Orsanmichele one way in 1757, but Luigi Passerini describes it differently in 1860, we have a sense of how images were moved inside the structure between the time of the two writings and thus how its program of visual material changed from one period to another.

A second kind of annotation comes in the form of transcriptions of original documents, published by scholars, that address various issues associated with a building or the objects connected to it. The wealth of material available to the scholarly community in the ample contents of the Archivio di Stato in Florence provides a seemingly ceaseless flow of information from primary sources. These documents can often (but not always) confirm the locations of these objects, the dates of their commission and/or installation, and the identities of the artists and/or patrons who were involved in those projects.

Using as sources the findings of previous scholars and the primary archival material they used to arrive at their conclusions, art historians produce short explanatory essays that detail specific features of buildings and the objects inside them which are then embedded into Potree and photogrammetric models (Fig. 7). These essays include basic information and data based on scholarly consensus, as well as brief summaries that might explain the iconographic content of an image, the way in which a liturgical object was used, the highlights of the artist’s career, the interests of the object’s patron, or the ways in which the image or building may have been interpreted in the past within the context of others in its immediate vicinity.

Annotation for panel attached to pier in Orsanmichele: Matteo di Pacino, Devotional painting for a Florentine guild (Guild of Doctors and Apothecaries?): Saints Cosmas and Damian; [Left predella]: Miracle of the Transplantation of the Black Leg; [Right predella]: Decapitation of Saints Cosmas and Damian, circa 1370–1374

Creating and embedding annotations in the model is of fundamental importance in this project. Point cloud and photogrammetric models present users with important information when they stand alone, but annotations provide context for those models, detailed information to help users understand what they are seeing, and intellectual tools to help them both critique the interpretative reconstructions inserted into models and to venture forth with interpretations of their own. Without annotations, models of cultural heritage sites have no meaning, no historical value, and no purpose beyond the level of being mere digital curiosities.

Annotations are added to the point cloud model using the “Annotation” function from the Potree.js library. Basic annotations can display a title and a description and can contain standard HTML elements like links, jpgs, or film clips. Annotations must include coordinates for the annotation, a camera position, and a camera target. The coordinates will fix the annotation in the model, while the camera position and target will direct the user to a set viewpoint when the annotation is clicked in the 3D scene. The coordinates for the annotation can be located using the Point Measurement tool in the Potree Viewer. The camera position and target can be found by navigating to the desired view in the Potree Viewer, then selecting “Camera” under the scene objects section of the menu. The values will appear in the “Properties” section of the menu.
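Because each annotation needs three coordinate sets read off by hand from the viewer, it can help to keep them in a small validated data file that the page script later consumes. The sketch below shows one way to do this; the field names mirror the requirements described above but are illustrative assumptions, they would need to be mapped onto whatever options the page script passes to Potree, and the coordinates shown are placeholders rather than values from the Orsanmichele model.

```python
import json
import math
from dataclasses import asdict, dataclass

Vec3 = tuple[float, float, float]


@dataclass
class AnnotationRecord:
    title: str
    description: str          # may contain HTML such as links or images
    position: Vec3            # where the annotation is fixed in the model
    camera_position: Vec3     # viewpoint the user is moved to on click
    camera_target: Vec3       # point the camera looks at from that viewpoint


def validate(record: AnnotationRecord) -> None:
    """Reject records whose coordinate sets are not three finite numbers."""
    for name in ("position", "camera_position", "camera_target"):
        vec = getattr(record, name)
        if len(vec) != 3 or not all(math.isfinite(v) for v in vec):
            raise ValueError(f"{name} must hold three finite numbers")


def to_json(records) -> str:
    """Validate every record and serialise the list for use by a web page."""
    for record in records:
        validate(record)
    return json.dumps([asdict(r) for r in records], indent=2)


example = AnnotationRecord(
    title="Saints Cosmas and Damian",
    description="<p>Matteo di Pacino, circa 1370-1374.</p>",
    position=(1.0, 2.0, 3.0),           # placeholder coordinates
    camera_position=(4.0, 5.0, 6.0),    # placeholder coordinates
    camera_target=(1.0, 2.0, 3.0),      # placeholder coordinates
)
print(to_json([example]))
```

Keeping the records separate from the page markup also lets art historians revise titles and descriptions without touching the viewer code.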

Case study

The case involves the 3D color rendering of the three-story building of Orsanmichele, the former granary that burned in 1304, was rebuilt soon thereafter, and was decorated with paintings and sculptures after its transformation into a church in 1336. This example can be found on the project website at https://3d.wlu.edu/v21/pages/orsanmichele.html.

The church of Orsanmichele is located in the center of Florence along the Via dei Calzaiuoli, a major urban avenue that links the civic center of the city (the Palazzo della Signoria, once occupied by its publicly elected governors) to its spiritual center (the cathedral and baptistery). Originally used as a monastery, the three-story rectangular building was converted into a grain loggia in the middle of the thirteenth century [41]. But when a painting affixed to one of the loggia’s columns began to work miracles in 1292, the space was quickly transformed into a popular cult center [42]. An arsonist’s rage resulted in the destruction of the structure in the fire of 1304, but Orsanmichele was quickly rebuilt, probably as early as 1315. In 1336 the building was refashioned into a church, with the twenty-one guilds of Florence pledging to support it by commissioning devotional artworks to decorate its interior and exterior. A painting of the Madonna and Child, produced by Bernardo Daddi, was installed there in the month of June 1347 and immediately began to work miracles of its own (Fig. 8). To celebrate its importance, an elaborate marble tabernacle was carved to house it during the decade of the 1350s, and by 1365 the miracle-working image in Orsanmichele, now the church of the guilds, was recognized as the official painting of the city [43].

In keeping with the legally binding agreement they had made, the guilds of Florence arranged for local painters to produce effigies of their patron saints to surround the miracle-working painting of the Madonna and Child (Fig. 3) [44]. The earliest of these, dedicated to the figure of Saint Bartholomew, was painted by an artist named Jacopo del Casentino on behalf of the Delicatessen Owners Guild and installed in Orsanmichele sometime around the year 1340 [45]. Andrea di Cione and his brother, Jacopo, painted a triptych for the Bankers Guild that was dedicated to Saint Matthew and installed in 1369 on a pier near the cult image of the Madonna and Child. Matteo di Pacino’s panel of Saints Cosmas and Damian was installed on the pier maintained by the guild of Doctors and Apothecaries by 1375, at nearly the same time that Lorenzo di Bicci’s panel of Saint Martin appeared on the pier claimed by the Vintners guild. Giovanni del Biondo’s painting of Saint John the Evangelist, commissioned by the Silk guild, was installed next to Bernardo Daddi’s Madonna and Child by 1380. Nearly every side of the twelve piers inside the church of Orsanmichele held one of these dossal panels dedicated to a guild’s patron saint. Yet, for reasons that escape us still, this decorative program was deemed untenable by the church’s proprietors. By 1409 each of these panels had been removed and dispersed to allow an artist named Niccolò di Pietro Gerini to paint frescoes of these same figures in niches that were carved into the piers (Fig. 2). The original decorative program of Orsanmichele’s interior was therefore altered by these new additions.

Although the building of Orsanmichele still stands, and although the tabernacle and cult image of the Madonna and Child have never been moved from it, the remainder of the original decorative program inside the granary-turned-church has been stripped away forever. The dossal panels that have survived, now maintained in art museums on either side of the Atlantic Ocean, can be seen neither in their intended setting nor in relation to the other dossal panels that joined them in the open space of Orsanmichele. This dispersal of imagery makes the cultural historian’s job of understanding the form and function of one of Europe’s most important medieval centers unusually difficult. The only way to see the appearance of this space as it was originally conceived is to create a hybrid model that fuses a reconstruction of the architectural space with models of the artworks that were intended to be seen there.

3D data collection and processing of Orsanmichele

LiDAR data for the exterior and interior spaces of Orsanmichele were collected using two different commercial LiDAR units that also record color information. The LiDAR scanners were used to capture data on all three floors of the structure, including the cult image of the Madonna by Bernardo Daddi (1347) and its surrounding tabernacle on the ground floor, the original sculptures of guild patron saints (1340–1463) in the museum on the primo piano, the rafters above the secondo piano, and the winding staircase on the northwest corner of the building that connects all three floors [46]. The 3D and color information of the exterior of Orsanmichele was collected with LiDAR units as well. The full point cloud model of this building contains 2.7 billion points, whereas the decimated version that is available online and appears in the illustrations of this article contains 326 million points, a significant reduction that makes viewing the model manageable on a typical personal computer.
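The scale of that reduction can be worked out from the two point counts given above. The short sketch below does only this arithmetic; `summarizeDecimation` is a hypothetical helper introduced for illustration and says nothing about how the decimation itself was performed.

```javascript
// Hypothetical helper: compares a full and a decimated point count.
function summarizeDecimation(fullPoints, decimatedPoints) {
  if (!(fullPoints > 0) || !(decimatedPoints > 0) || decimatedPoints > fullPoints) {
    throw new Error("expected 0 < decimatedPoints <= fullPoints");
  }
  return {
    retainedPercent: (decimatedPoints / fullPoints) * 100, // share of points kept
    reductionFactor: fullPoints / decimatedPoints,         // how many times smaller
  };
}

// Point counts reported for the Orsanmichele model.
const orsanmichele = summarizeDecimation(2.7e9, 326e6);

console.log(`retained: ${orsanmichele.retainedPercent.toFixed(1)}%`);          // retained: 12.1%
console.log(`reduction factor: ${orsanmichele.reductionFactor.toFixed(1)}x`);  // reduction factor: 8.3x
```

In other words, the online model keeps roughly one point in eight from the full data set.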

3D data collection and processing of artworks associated with Orsanmichele

Paintings known to have been installed inside Orsanmichele between 1340 and 1400 were photographed, modeled, and annotated through the workflow previously described in the section titled “LiDAR data collection of buildings” (Fig. 9). These paintings include three works now in the Florentine Galleria dell’Accademia (Giovanni del Biondo’s dossal of Saint John the Evangelist commissioned by the Silk Guild, Lorenzo di Bicci’s painting of Saint Martin for the guild of the Vintners, and Jacopo del Casentino’s panel of Saint Bartholomew for the Delicatessen Owners), as well as the Saint Matthew Triptych by Andrea and Jacopo di Cione for the Bankers Guild, now in the Uffizi Galleries (Fig. 3) [47,48,49,50]. These models were then stitched into the point cloud model of Orsanmichele created from the LiDAR scans and pinned to the pier upon which each had originally been placed (Fig. 10). The model of the Saint Matthew Triptych was then annotated with short commentaries.

This digitally reconstructed space directly impacts the way scholars approach their study of this artistic environment. The discipline of art history demands a rigorous application of visual observation that influences and guides the scholar’s analytical study and inquiry. Having the ability to see the painting of St. Matthew hanging in its original location next to the other paintings and objects that were always intended to be seen alongside it in Orsanmichele immediately alters our perception of the image and our interpretation of its message and importance. No longer examined only as single images that stand alone on museum walls, panels from the fourteenth-century guild church can now be studied as they were meant to be seen—as parts of a larger ensemble of effigies of Apostles, Evangelists, and Early Christian Saints that were set in specific positions around the miracle-working cult image of the Madonna of Orsanmichele. One can now see, for example, the emphasis that was placed on scenes of martyrdom in predella and apron panels in many of these images, and how the representation of the elegantly dressed Matthew corresponded to the more simply garbed figures of Martin, Bartholomew, and John the Evangelist—currently in the Accademia Gallery—that once occupied the piers surrounding Andrea di Cione’s painting, now in the Uffizi Galleries. One can also see the effects of the glittering gold ground paintings hanging on the twelve piers that once comprised the interior decorations of Orsanmichele before their removal in the early fifteenth century.

The successful test of this process led to the addition of a fifth painting to this group of dossals from Orsanmichele. A panel currently located in the North Carolina Museum of Art representing Sts. Cosmas and Damian, the patrons of the guild of doctors and apothecaries, has been connected in the scholarly literature to one of the piers of Orsanmichele [51]. This painting was photographed, edited, and modeled (Fig. 11). The photogrammetric model was inserted into the point cloud model of Orsanmichele on the pier maintained by the guild that commissioned the work from Matteo di Pacino, and an explanatory annotation was written and then attached to the point cloud model next to the panel (Fig. 7) [44]. English translations of descriptions of Orsanmichele written in Italian by Giuseppe Richa (1754) and Luigi Passerini (1860), as well as a translation into English of the analysis of the building written in German by Walter and Elisabeth Paatz (1955), are currently underway, and transcriptions of selected documents previously published in 1996 by Diane Zervas will be added as annotations [52].

The hybrid 3D model presented in our case study lacks some of the fidelity of the original data set. Decimation of the LiDAR data lowers the density of the point cloud, and the SfM photogrammetry models were made with lower polygon counts and smaller textures than is possible with the photographs. While this may seem to threaten the integrity of the overall visualization of the total product, the resulting model has a satisfactory appearance and enough points to easily make accurate measurements. Most importantly, the utility of combining multiple data types and of making the model easily accessible online offsets small reductions in spatial resolution.

Discussion

Although point cloud and photogrammetric models of buildings and artworks have been produced before, Florence As It Was developed a novel workflow that produces a highly accessible hybrid model that combines these two types of models. Color point cloud models of historic buildings created using LiDAR and associated photogrammetric works of art have been combined to give the best of both worlds: architectural spaces reconstructed with high spatial accuracy containing artworks at a suitable spatial resolution to recreate virtual historic spaces as they appeared before the year 1500. The choice to use point clouds for the architectural portion of these models is mainly one of convenience. It is possible to create textured mesh models of large architectural spaces from LiDAR point clouds, but it is very time consuming and technically challenging. Given the large scale of the project undertaken by Florence As It Was, it would be prohibitive to add that step to the workflow. The detail in the colored point cloud models is more than sufficient to give the viewer a sense of the architectural space in which the artworks, the main focus of the final product, are placed.

These hybrid models, stored on a secure server hosted at a home institution, may be accessed through a web browser by any user. No longer stored for the exclusive use of curators, restorers, or museum directors, these models may now come into the public realm, free of charge, for the edification of the general public and for scholarly research internationally. Thus, this workflow has enabled us to create a virtual space of Orsanmichele that may be traversed and inspected with navigational and measuring tools already included in the Potree interface (Figs. 12 and 13). The 3D models of the panel paintings now in the collections of the Accademia, Uffizi, and North Carolina Museum of Art can be reunited, examined, and discussed in a new context by rendering them into their original locations within the architectural model to recreate the intended ambiance of the space that has not been available to modern eyes since 1409 (Fig. 10). Cultural heritage professionals, scholars, and students may test the published hypotheses found in the scholarly literature without traveling abroad, negotiating with bureaucratic agencies for permissions, or embarking on cumbersome exercises on site.

Future work

The Florence As It Was team intends to model no fewer than forty extant structures designed and built in Florence before the year 1500. The majority of this work will be completed during the 2022–23 academic year. Each model will be attached to (and highlighted on top of) its corresponding reference in the Buonsignori Map that appears on the landing page of http://florenceasitwas.wlu.edu. When appropriate, each building will then be populated with the photogrammetric models of the artworks that have been created from photographs taken by team members and modeled at Washington and Lee University. The database will be updated regularly, with geographic coordinates included to permit users to map the presence of historical figures, objects, and the locations of activities in the city according to filters selected by each user. Efforts will be made to combine this data with that collected by the Digital Humanities teams of Digital Sepoltuario, Florence4D, DECIMA, and CATASTO with the goal of creating a single 2D map that contains artistic, architectural, social, economic, political, familial, and religious information to form a single Florentia Illustrata site hosted by the Harvard University Center of Italian Renaissance Studies at the Villa I Tatti.

Conclusions

The workflow employed by Florence As It Was demonstrates that approaches to digital humanities in general and digital art history in particular have only begun to scratch the surface of their true potential.

The reconstruction methodology for creating a hybrid model with two distinct types of spatial data—one for cultural heritage architectural sites and the other for artworks and objects that were once installed there—coupled with historical annotations introduces three unique opportunities for cultural heritage researchers. First, it establishes a workflow that permits researchers to combine multiple data types into a single spatial interface. The data can include point cloud models produced from laser scans, textured mesh models, text annotations, video, and sound. Second, this process enables designers to test their hypotheses digitally when reconstructing architectural, artistic, or archaeological sites of their own. Third, it provides professionals, students, and researchers direct access to spaces and objects rendered in these models, thus granting unfettered time for study to both scholarly and general audiences.

The work to catalog museum collections, provide data and information about spaces and objects, and map that information onto 2D surfaces has provided users with invaluable resources with which to consider their materials. However, digitized models of cultural monuments can provide restorers with additional data that can have real-world consequences, as was the case recently when the accurate point cloud rendering of Notre Dame Cathedral made by Dr. Andrew Tallon in 2013 was employed to guide the direction of the reconstruction process necessitated by the devastating fire of 2019 [53].

Placing these artworks in their original settings using digital tools that can be accessed by anyone, anywhere, at any time now expands both our understanding of an artwork’s original context and the accessibility of this information to the public. The workflow we have created can be replicated with relative ease by any institution that has the personnel, the time, and the budgetary flexibility to invest in the necessary equipment and software to recreate the spaces and decorations of the world’s cultural monuments with a level of precision never before attainable in the analog world.

Availability of data and materials

Coding data may be consulted at https://github.com/florenceasitwas/potree-21-pages/blob/fc0ca16f6be477a4f6cf060cb5d8252868ca2656/orsanmichele.html. The 3D models produced by Florence As It Was are available on the project website at https://florenceasitwas.wlu.edu/maps.html and https://sketchfab.com/FLAW/models. Data pertaining to objects and structures examined in this article may be made available upon request of their proprietors.

References

Besl PJ, McKay ND. Method for registration of 3D shapes. Sens Fusion IV. 1992;1611:586–606.

Li Z, Chen J, Baltsavias E. Advances in photogrammetry, remote sensing and spatial information sciences; ISPRS Congress Book 2008. London: Taylor & Francis Group; 2008. p. 527.

Li D, Shan J, Gong J. Geospatial technology for earth observation. New York: Springer; 2009. p. 558.

Li Y, Liu C. Applications of multirotor drone technologies in construction management. Int J Constr Manag. 2019;19:401–12. https://doi.org/10.1080/15623599.2018.1452101.

Grun A, Remondino F, Zhang L. Photogrammetric reconstruction of the Great Buddha of Bamiyan, Afghanistan. Photogramm Rec. 2004;19:177–99.

El-Hakim S, Gonzo L, Voltolini F, Girardi S, Rizzi A, Remondino F, Whiting E. Detailed 3D modelling of castles. Int J Architect Comput. 2007;5:199–220. https://doi.org/10.1260/1478-0771.5.2.200.

Remondino F. Heritage recording and 3D modeling with photogrammetry and 3D scanning. Remote Sens. 2011;3(6):1104–38. https://doi.org/10.3390/rs3061104.

Guidi G, Russo M, Angheleddu D. 3D survey and virtual reconstruction of archeological sites. Digit Appl Archaeol Cult Heritage. 2014;1(2):55–69. https://doi.org/10.1016/j.daach.2014.01.001.

Lezzerini M, Antonelli F, Columbu S, Gadducci R, Marradi A, Miriello D, Parodi L, Secchiari L, Lazzeri A. Cultural heritage documentation and conservation: three-dimensional (3D) laser scanning and geographical information system (GIS) Techniques for thematic mapping of facade stonework of St. Nicholas Church (Pisa, Italy). Int J Archit Heritage. 2016;10(1):9–19. https://doi.org/10.1080/15583058.2014.924605.

Guidi G, Beraldin JA, Ciofi S, Atzeni C. Fusion of range camera and photogrammetry: a systematic procedure for improving 3-D models metric accuracy. IEEE Trans Syst Man Cybern B Cybern. 2003;33(4):667–76. https://doi.org/10.1109/TSMCB.2003.814282.

Bernardini F, Rushmeier H, Martin IM, Mittleman J, Taubin G. Building a digital model of Michelangelo’s Florentine Pieta. IEEE Comput Graphics Appl. 2002;22(1):59–67. https://doi.org/10.1109/38.974519.

Guidi G, Remondino F, Russo M, Menna F, Rizzi A, Ercoli S. A multi-resolution methodology for the 3D modelling of large and complex archaeological areas. Int J Architect Comput. 2009;7:40–55. https://doi.org/10.1260/147807709788549439.

Rodríguez-Gonzálvez P, Jiménez Fernández-Palacios B, Muñoz-Nieto ÁL, Arias-Sanchez P, Gonzalez-Aguilera D. Mobile LiDAR system: new possibilities for the documentation and dissemination of large cultural heritage sites. Remote Sens. 2017;9:189. https://doi.org/10.3390/rs9030189.

Angelini MG, Baiocchi V, Costantino D, Garzia F. Scan to BIM for 3D reconstruction of the Papal Basilica of Saint Francis in Assisi in Italy. Int Arch Photogramm Remote Sens Spat Inf Sci. 2017;42:47–54. https://doi.org/10.5194/isprs-archives-XLII-5-W1-47-2017.

Bini M, Battini C, editors. Nuove Immagini di monumenti fiorentini. Alinea: Florence; 2007.

Adamopoulos E, Colombero C, Comina C, Rinaudo F, Volinia M, Girotto M, Ardissono L. Integrating multiband photogrammetry, scanning, and GPR for built heritage surveys: the façades of Castello del Valentino. ISPRS Ann Photogramm Remote Sens Spat Inf Sci. 2021;8(1):1–8. https://doi.org/10.5194/isprs-annals-VIII-M-1-2021-1-2021.

Minaroviech J. Digitization of the cultural heritage of Slovakia combining of lidar data and photogrammetry. SDH. 2017;1(2):590–606. https://doi.org/10.14434/sdh.v1i2.23286.

Ramos M, Remondino F. Data fusion in cultural heritage—a review. Int Arch Photogramm Remote Sens Spat Inf Sci. 2015;40(5):359–63. https://doi.org/10.5194/isprsarchives-XL-5-W7-359-2015.

Condorelli F, Pescarmona G, Ricci Y. Photogrammetry and medieval architecture using black and white analogic photographs for reconstructing the foundations of the lost rood screen at Santa Croce, Florence. Int Arch Photogramm Remote Sens Spatial Inf Sci. 2021;46(1):141–6. https://doi.org/10.5194/isprs-archives-XLVI-M-1-2021-141-2021.

Guidi G, Malik US, Frischer B, Barandoni C, Paolucci F. The Indiana University-Uffizi project: metrological challenges and workflow for massive 3D digitization of sculptures. In: 23rd International Conference on Virtual System & Multimedia (VSMM). 2017. p. 1–8. https://doi.org/10.1109/VSMM.2017.8346268.

Guidi G, Beraldin J, Atzeni C. High-accuracy 3D modeling of cultural heritage: the digitizing of Donatello’s “Maddalena.” IEEE Trans Image Process. 2004;13(3):370–80. https://doi.org/10.1109/TIP.2003.822592.

Tallon A. An architecture of perfection. J Soc Archit Hist. 2013;72(4):530–54. https://doi.org/10.1525/jsah.2013.72.4.530.

Campana S, Francovich R, editors. Laser scanner e Gps, paesaggi archeologici e tecnologie digitali. All’insegna del Giglio: Florence; 2006.

Madrigal A. The Laser Scans that could help rebuild Notre Dame Cathedral. The Atlantic. 2019. https://www.theatlantic.com/technology/archive/2019/04/laser-scans-could-help-rebuild-notre-dame-cathedral/587230/. Accessed 16 Apr 2019.

Reconstructing the destroyed church of San Maggiore, Florence. https://www.youtube.com/watch?v=ZUXa1nDtOB0 and http://florence4d.exeter.ac.uk/.

Adobe Camera Raw (Version 10.4.0.976) (2018). [Computer Plugin]. https://helpx.adobe.com/camera-raw/kb/camera-raw-plug-in-installer.html#fourteen_x

Register 360 (Version 2021.1.0). [Computer software]. https://leica-geosystems.com/

AgiSoft Metashape Professional (Version 1.2.6). [Computer Software] (2021). http://www.agisoft.com/downloads/installer/

Cinema 4D (version S23). [Computer Software] (2021). https://www.maxon.net

Schütz M, Ohrhallinger S, Wimmer M. Fast out-of-core octree generation for massive point clouds. Comput Graphics Forum. 2020;39:1–13.

Potree (Version 1.8). [Computer Software] (2021). https://github.com/potree/potree

CloudCompare (version 2.12) [GPL software] (2021). http://www.cloudcompare.org/

Potree Converter (Version 2.1). [Computer Software] (2021). https://github.com/potree/PotreeConverter

Richa, G. Notizie istoriche delle chiese fiorentine, vol. I. (Florence, 1754). Reprinted Rome, Italy: Multigrafica Editrice; 1989:10–13.

Passerini L. Storia degli Stabilimenti di Beneficenza della Città di Firenze. Florence: Le Monnier; 1853. p. 435–6.

Paatz, W., Paatz, E. Die Kirchen von Florenz, vols. 1–6. Frankfurt-am-Main: Vittorio Klosterman; 1952–1955.

Bent G. Public painting and visual culture in early republican Florence. New York: Cambridge University Press; 2016. p. 45–63.

Villani G. La Nuova Cronica. Parma: Fondazione Pietro Bembo; 1991. p. 628.

Del Migliore, F. Firenze: città nobilissima illustrata. Florence; 1684:534.

Taylor-Mitchell L. Guild commissions at Orsanmichele: some relationships between interior and exterior imagery in the Trecento and Quattrocento. Explorations in Renaissance Culture. 1994;20:61–88.

Bent G. Public painting and visual culture in early republican Florence. New York: Cambridge University Press; 2016. p. 202–19.

Zervas D. Orsanmichele a Firenze. Modena: Cosimo Panini; 1996. p. 633–4.

Boskovits, M., Tartuferi, A. Dipinti Volume Primo: Dal Duecento a Giovanni da Milano. Giunti: Florence; 2003:104–108.

Boskovits, M.; Parenti, D. Dipinti, Volume Secondo: Il Tardo Trecento. Florence: Giunti; 2010:50-55, 69-73.

Offner, R. A Critical and Historical Corpus of Florentine Painting, Section 4, Vol. 1. New York: New York Institute of Fine Arts; 1962:17–22.

Taylor-Mitchell, L. Images of St. Matthew Commissioned by the Arte del Cambio for Orsanmichele in Florence. Gesta; 1992:31/I:54–72.

Chiodo S. A corpus of Florentine painting: painters in Florence after the ‘Black Death.’ The master of the Misericordia and Matteo di Pacino. Florence: Giunti; 2011. p. 470.

Zervas, D. Orsanmichele: Documents 1336–1452. Modena: F.C. Panini; 1996.

https://www.vassar.edu/stories/2019/190417-notre-dame-andrew-tallon.html Accessed 17 Sept 2021.

Acknowledgements

The authors wish to thank the following people and institutions for their intellectual support, diplomatic interventions, visionary guidance, and technical assistance: Niall Atkinson, Frate Bernardo, Larry Bird, Lorenzo Cafaggi, Cleaveland Candler, Donald Childress, Sidney Gause Childress, Sonia Chiodo, Marc Conner, Paola D’Agostino, Giuseppe de Micheli, Stefano Filipponi, Andrea Giordani, Erik Gustafson, Robert Hageboeck, Lena Hill, Elizabeth Holleman, William Hood, Patrizia Lattarulo, Anne Leader, Andrea Lepage, Paul Low, Chiara Marinelli, Alessandro Nova, Lorriann Olan, Kathleen Olson, Carol Overend, Catherine Overend, George Overend, Fabrizio Pacetti, Francesca Pacetti, Alina Payne, Giovanni Pescarmona, David Peterson, Gaia Ravalli, Mandy Richter, David Saacke, Francesco Sgambelluri, Gail Solberg, Nicholas Terpstra, Claudia Timossi, Gloria Tucci, Timothy Verdon, Melissa Vise, Gregory Walsh, Julie Woodzicka, Paul Youngman, and Carlo Zappia. The following undergraduate students at Washington and Lee University have been invaluable members of our team: Miles Bent, Ava Boussy, Sonia Brozak, Alice Chambers, Katherine Dau, Cadence Edmonds, Georgie Gaines, Colby Gilley, Mary Catherine Greenleaf, David Win Gustin, Samuel Joseph, Elyssa McMaster, Eloise Penner, Haochen Tu, and Aidan Valente. Catalena Bent (Oberlin College) assisted with LiDAR scanning in January 2018.

Funding

Support for the publication of this article was provided by the Class of 1956 Provost's Faculty Development Endowment at Washington and Lee University. A grant from the Mellon Foundation (grant number 21500631) was received by Washington and Lee University in 2015 to encourage faculty, staff, and students to engage in digital humanities projects. The initial seed money for Florence As It Was came from this source. A grant from the Richard Lounsbery Foundation for Biological Research (no grant number) supported travel and scanning expeditions to Florence in 2019 and 2020. Gifts from Donald and Sidney Gause Childress and from George, Carol, and Catherine Overend secured equipment purchases, national and international travel, and support funds in 2020 and 2021. Some student assistants also received support from Washington and Lee University’s Financial Aid office.

Author information

Contributions

Conceptualization; George R. Bent, David Pfaff, and Mackenzie Brooks. Methodology; George R. Bent, David Pfaff, and Mackenzie Brooks. Validation; David Pfaff. Formal analysis; George R. Bent. Investigation; George R. Bent. Data curation; David Pfaff and Mackenzie Brooks. Writing—original draft preparation; George R. Bent and David Pfaff. Writing—review and editing; Mackenzie Brooks, John Delaney, Roxanne Radpour, David Pfaff, and George R. Bent. Visualization; George R. Bent. Project administration; George R. Bent. Funding acquisition; George R. Bent. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no conflict of interest. The funders had no role in the design of the study; in the collection, analyses, or interpretation of data; in the writing of the manuscript; or in the decision to publish the results.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

About this article

Cite this article

Bent, G.R., Pfaff, D., Brooks, M. et al. A practical workflow for the 3D reconstruction of complex historic sites and their decorative interiors: Florence As It Was and the church of Orsanmichele. Herit Sci 10, 118 (2022). https://doi.org/10.1186/s40494-022-00750-1

DOI: https://doi.org/10.1186/s40494-022-00750-1
